This blog isn't running on WordPress, Phoenix, or even a static site generator. It's running on a bespoke web framework I wrote in Elixir. I didn't want to pull in a massive framework just to serve some HTML, so I built exactly what I needed—from scratch, minus the HTTP parsing.

I'm primarily a Node developer, but I've been wanting to explore Elixir and the BEAM. This project was the perfect excuse to dive in and see how it handles the basics of the web.

Performance on a Budget

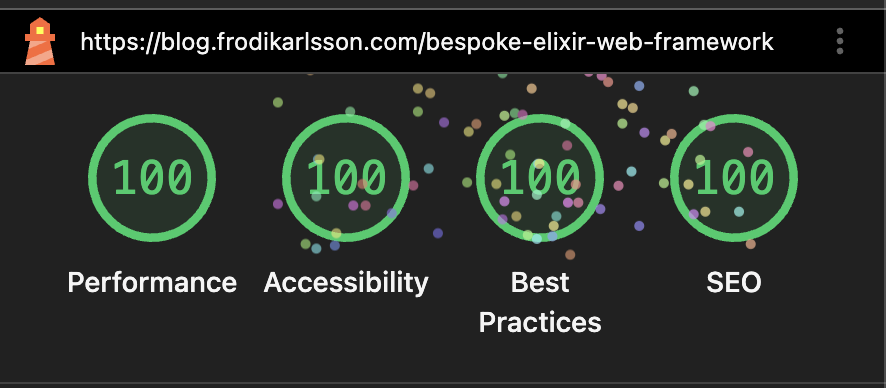

The entire application is hosted on the cheapest DigitalOcean droplet available, for just $4 a month. Despite the minimal resources, it achieves perfect Lighthouse scores. The combination of Elixir's efficiency and a lack of client-side bloat makes it incredibly fast.

Lighthouse measures perceived performance from a single user's perspective. To see how it holds up under real load, I ran wrk against the live server with 100 concurrent connections over 30 seconds:

```
wrk -t4 -c100 -d30s http://<server>

Running 30s test
  4 threads and 100 connections
  Thread Stats   Avg      Stdev     Max   +/- Stdev
    Latency    54.53ms   34.46ms  368.18ms   93.11%
    Req/Sec   496.29    144.31    727.00     80.87%
  59130 requests in 30.11s, 148.65MB read
Requests/sec:   1963.99
Transfer/sec:      4.94MB
```

Nearly 2,000 requests per second with an average latency under 55ms, on a $4 droplet. The standard deviation is low too, so the average isn't misleading.

For local comparison, I also ran the same wrk load against three routes on the dev server (cached page, a plain send_resp, and a per-request template parse). This isn't a production benchmark, but it makes the cache impact obvious:

Local (localhost:8080, 4 threads, 100 connections, 30s)

Cached page: 25,560 req/s

Plain response: 26,256 req/s

Uncached parse: 3,143 req/s

Routing Requests

I used Plug.Router to handle the routing. It's a simple, declarative way to map paths to functions. Here's how the main router is structured:

```elixir
defmodule Webserver.Router do
  use Plug.Router

  # ... imports and aliases ...

  # Plugs run top to bottom; static files are served before route matching.
  plug(Plug.RequestId)
  plug(Plug.Logger)
  plug(Plug.Static, at: "/static", from: {:webserver, "priv/static"})
  plug(:match)
  plug(:dispatch)

  get "/health", do: json(conn, 200, %{status: "ok"})
  get "/live-reload", to: Webserver.LiveReload

  # Everything else is handed to the page server.
  forward "/admin", to: AdminRouter
  forward "/", to: Webserver.Server
end
```

The Core Server Loop

The main logic for serving pages lives in Webserver.Server. It takes the request path, looks it up in the cache, and returns the parsed HTML. If the page doesn't exist, it handles the 404 gracefully.

```elixir
def call(conn, _opts) do
  path = request_path(conn)
  result = try_get_page(path)

  case result do
    {:ok, parsed} ->
      conn
      |> put_resp_content_type("text/html")
      |> send_resp(200, parsed)

    {:error, :not_found} ->
      # Render custom 404 page...
  end
end
```

Caching with ETS

To keep things fast, I use ETS (Erlang Term Storage) for caching fully rendered HTML. Multiple processes can read from the cache simultaneously without any locking, which is essential for high concurrency.

```elixir
def get_page(server, path) do
  table = table_for(server)

  case :ets.lookup(table, {:page, path}) do
    [{_, %PageEntry{} = entry}] ->
      handle_maybe_stale(table, server, path, entry)

    [] ->
      GenServer.call(server, {:fetch_and_cache, path})
  end
end
```

The handle_maybe_stale function implements a simple TTL-based revalidation strategy. It checks if a configurable interval has passed since the last check. If it has, the cache revalidates the entry based on the file's mtime on disk.
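To make the TTL check concrete, here is a minimal sketch of that decision as a pure function. It is not the code behind this blog: the module name, the `Entry` struct and its fields, and the three return atoms are all assumptions for illustration.

```elixir
defmodule StalenessSketch do
  @moduledoc "Hypothetical sketch of a TTL-gated mtime check."

  # Assumed shape of a cache entry; the real PageEntry may differ.
  defmodule Entry do
    defstruct [:html, :mtime, :checked_at]
  end

  # Returns:
  #   :fresh     - the check interval has not elapsed, so serve from cache
  #   :unchanged - interval elapsed, but the file's mtime still matches
  #   :changed   - interval elapsed and the file was modified or removed
  def check(%Entry{} = entry, file_path, now_ms, interval_ms) do
    if now_ms - entry.checked_at < interval_ms do
      :fresh
    else
      cached_mtime = entry.mtime

      # time: :posix makes File.stat return mtime as integer seconds.
      case File.stat(file_path, time: :posix) do
        {:ok, %File.Stat{mtime: ^cached_mtime}} -> :unchanged
        _ -> :changed
      end
    end
  end
end
```

Only the `:changed` case needs a re-parse. The other two can keep serving the cached HTML, with `:unchanged` just bumping the last-checked timestamp.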

Stale-While-Revalidate (SWR)

The first version of this cache did the revalidation synchronously on the request path. That keeps correctness simple, but it also means every "stale" request gets serialized through a single GenServer and can block behind filesystem reads and parsing. Under enough traffic, that shows up as tail latency (or timeouts) even though we already have perfectly good HTML sitting in ETS.

The current approach is stale-while-revalidate: when a cached entry is due for a check, the server returns the cached HTML immediately and kicks off a background revalidation that updates ETS if the file changed.

Benefits: no request ever blocks on revalidation work, so latency is predictable and the cache stays responsive under load. It also naturally smooths out bursts because revalidation happens at most once per page per interval (deduped).

Trade-offs: the HTML can be briefly stale (bounded by the check interval) and background failures don't surface to the user directly. Instead, you monitor revalidation error counters and logs.
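The pattern is easier to see in code. What follows is a simplified sketch under stated assumptions, not the cache this blog runs: `SwrSketch`, the `:loader` option, and the message names are hypothetical, and the background task reloads the page unconditionally instead of comparing mtimes first.

```elixir
defmodule SwrSketch do
  use GenServer

  def start_link(opts), do: GenServer.start_link(__MODULE__, opts)

  # Reads go straight to ETS. Only misses and due checks message the server.
  def get(server, table, path, interval_ms) do
    now = System.monotonic_time(:millisecond)

    case :ets.lookup(table, path) do
      [{^path, html, checked_at}] ->
        # Due for a check: ask for a background refresh, but answer right away.
        if now - checked_at >= interval_ms, do: GenServer.cast(server, {:revalidate, path})
        {:ok, html}

      [] ->
        GenServer.call(server, {:load, path})
    end
  end

  @impl true
  def init(opts) do
    name = Keyword.fetch!(opts, :table)
    table = :ets.new(name, [:named_table, :protected, read_concurrency: true])
    {:ok, %{table: table, loader: Keyword.fetch!(opts, :loader), in_flight: MapSet.new()}}
  end

  @impl true
  def handle_call({:load, path}, _from, state) do
    html = state.loader.(path)
    store(state.table, path, html)
    {:reply, {:ok, html}, state}
  end

  @impl true
  def handle_cast({:revalidate, path}, state) do
    if MapSet.member?(state.in_flight, path) do
      # A refresh for this page is already running, so drop the duplicate.
      {:noreply, state}
    else
      server = self()
      loader = state.loader

      Task.start(fn ->
        result =
          try do
            {:ok, loader.(path)}
          rescue
            _ -> :error
          end

        send(server, {:revalidated, path, result})
      end)

      {:noreply, %{state | in_flight: MapSet.put(state.in_flight, path)}}
    end
  end

  @impl true
  def handle_info({:revalidated, path, result}, state) do
    case result do
      {:ok, html} -> store(state.table, path, html)
      # On failure the old entry keeps being served; a real system would log or count this.
      :error -> :ok
    end

    {:noreply, %{state | in_flight: MapSet.delete(state.in_flight, path)}}
  end

  defp store(table, path, html) do
    :ets.insert(table, {path, html, System.monotonic_time(:millisecond)})
  end
end
```

The expensive work happens in a separate task, so the GenServer only does bookkeeping. The `in_flight` set is what gives the "at most once per page" behaviour.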

A Custom Templating Language

I wrote a tiny templating language for this project. The honest reason is that I really enjoy building parsers and state machines in Elixir (I'm doing something similar on my chess project). It supports partials, named slots, and lightweight attributes.

```
<% layout.html title="My Page" %>
  <slot:title>My Page</slot:title>
  <slot:body>
    <% header.html %/>
    <p>Content goes here.</p>
  </slot:body>
<%/ layout.html %>
```

The parser is a simple tag scanner: it walks the input, finds <% ... %> tags, and renders depth-first so child content is fully resolved before the parent partial is applied. Slots are passed via <slot:name>...</slot:name> and injected into the partial with {{name}}. Attributes are passed on the component tag and injected with {{@attr}} (and missing required attrs are treated as an error, which makes mistakes loud).
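The injection step on its own can be sketched in a few lines. This is an illustration only: `SlotSketch`, the placeholder regex, and the choice to render unknown slots as an empty string are assumptions, and the real parser also handles tag scanning and nesting, which this skips.

```elixir
defmodule SlotSketch do
  # Matches {{name}} (slot) and {{@name}} (attribute). Asset placeholders
  # such as {{+ /static/...}} are deliberately not matched here.
  defp placeholder, do: ~r/\{\{(@?)([a-z_][a-z0-9_]*)\}\}/

  # slots and attrs are maps with string keys, already rendered depth-first.
  def inject(partial, slots, attrs) do
    missing =
      for [_, "@", name] <- Regex.scan(placeholder(), partial),
          not Map.has_key?(attrs, name),
          uniq: true,
          do: name

    case missing do
      [] -> {:ok, Regex.replace(placeholder(), partial, &fill(&1, &2, &3, slots, attrs))}
      names -> {:error, {:missing_attrs, names}}
    end
  end

  defp fill(_match, "@", name, _slots, attrs), do: Map.fetch!(attrs, name)
  # Assumption: a slot the caller did not provide renders as nothing.
  defp fill(_match, "", name, slots, _attrs), do: Map.get(slots, name, "")
end
```

Checking for missing attributes before doing any replacement keeps the error path simple: the caller gets either fully rendered HTML or a list of what was forgotten.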

Static assets are referenced with {{+ /static/...}} placeholders which resolve through the digested asset manifest in production (and pass through as-is in development). That keeps templates readable while still getting long-cache hashed filenames.
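As a rough sketch of how such a placeholder could be resolved, assuming the manifest is a plain map from logical path to digested path (the real manifest format may differ, and `AssetSketch` is a made-up name):

```elixir
defmodule AssetSketch do
  # Replaces {{+ /static/...}} with the digested path from the manifest.
  # Paths missing from the manifest pass through unchanged, which is also
  # what an empty manifest gives you in development.
  def resolve(html, manifest) do
    Regex.replace(~r|\{\{\+\s*(/static/[^\s}]+)\s*\}\}|, html, fn _match, path ->
      Map.get(manifest, path, path)
    end)
  end
end
```

For example, with `%{"/static/app.css" => "/static/app-3f2a9c.css"}` as the manifest, `{{+ /static/app.css}}` becomes `/static/app-3f2a9c.css`. With an empty map it becomes `/static/app.css`.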

For images, there is a dedicated <% img ... %/> tag. It validates that the source is a real /static image, looks up width/height from a build-generated assets_meta.json, and emits responsive srcset. If a WebP variant exists it wraps the output in a <picture> with a WebP <source>.

That means the HTML gets correct intrinsic sizing (no CLS), and the browser can pick an appropriately sized image.
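Here is a hedged sketch of what the rendering side of such a tag might look like. The `meta` map stands in for a decoded assets_meta.json, but its shape (`:width`, `:height`, `:variants`, optional `:webp`) is invented for this example, and the sketch skips HTML escaping of `alt` for brevity.

```elixir
defmodule ImgSketch do
  # meta: %{src => %{width: w, height: h, variants: [{path, width}], webp: [{path, width}]}}
  def render(src, alt, meta) do
    case Map.fetch(meta, src) do
      :error ->
        # Unknown images are an error rather than a silently broken tag.
        {:error, {:unknown_image, src}}

      {:ok, info} ->
        set = srcset(info.variants)

        img =
          ~s|<img src="#{src}" srcset="#{set}" width="#{info.width}" height="#{info.height}" alt="#{alt}">|

        {:ok, wrap(img, Map.get(info, :webp, []))}
    end
  end

  # No WebP variants: emit the bare <img>.
  defp wrap(img, []), do: img

  defp wrap(img, webp) do
    ~s|<picture><source type="image/webp" srcset="#{srcset(webp)}">#{img}</picture>|
  end

  defp srcset(variants) do
    Enum.map_join(variants, ", ", fn {path, width} -> "#{path} #{width}w" end)
  end
end
```

The explicit `width` and `height` attributes are what let the browser reserve space before the image loads.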

Static Assets and Build Pipeline

There is an explicit asset build step that does a bit more than just copying files. In production it generates responsive raster variants (via vips), generates WebP variants (via cwebp), writes assets_meta.json for dimensions, and then digests the output for cache busting. The build is strict in production: missing tooling or failed conversions will fail the build instead of silently shipping a broken page.
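The digest step is the easiest part to illustrate. A minimal sketch, assuming an MD5 content hash truncated to 12 hex characters and a `name-hash.ext` naming scheme (both are choices made for this example, not necessarily what the build uses):

```elixir
defmodule DigestSketch do
  # Derives a cache-busting filename from the file's contents, so the
  # name changes only when the bytes change.
  def digested_name(path, contents) do
    hash =
      contents
      |> :erlang.md5()
      |> Base.encode16(case: :lower)
      |> binary_part(0, 12)

    Path.rootname(path) <> "-" <> hash <> Path.extname(path)
  end

  # files: a list of {logical_path, contents} tuples.
  # Returns a map of logical path => digested path.
  def manifest(files) do
    Map.new(files, fn {path, contents} -> {path, digested_name(path, contents)} end)
  end
end
```

Because the hash depends only on content, unchanged files keep their names across deploys and stay cached in the browser.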

Live Reload

For a smooth development experience, I implemented live reloading. The server watches the filesystem using fs. The browser keeps an SSE connection open, and when a change is detected, the server sends a signal to reload the page or swap CSS in place.
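On the wire, an SSE message is just text: an `event:` line, a `data:` line, and a blank line. The sketch below shows one way to turn a changed path into such a frame. The event names `css` and `reload` and the module name are assumptions. In a Plug handler the resulting string would typically be written with `Plug.Conn.chunk/2` after `Plug.Conn.send_chunked/2`.

```elixir
defmodule LiveReloadSketch do
  # Decide what the browser should do for a changed file:
  # stylesheets can be swapped in place, everything else reloads the page.
  def event_for(path) do
    if Path.extname(path) == ".css", do: {"css", path}, else: {"reload", path}
  end

  # Encode an {event, data} pair as a Server-Sent Events frame.
  # The trailing blank line terminates the message.
  def frame({event, data}), do: "event: #{event}\ndata: #{data}\n\n"
end
```

On the browser side, an `EventSource` listener for each event name can then either swap the stylesheet link or reload the page.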

Hosting and Deployment

Deployment is fully automated with GitHub Actions and Docker. When I push to main, an image is built and pushed to GHCR. On the droplet, Watchtower detects the new image and restarts the container within 30 seconds. No manual intervention required.

Because image variants are generated during the build, the Docker image also includes the required native tools (libvips and webp) so production builds are reproducible.

After the first deploy I hit an annoying issue: the container would slowly degrade and eventually start returning internal 503s. The root cause turned out to be the Docker HEALTHCHECK. I originally used ./bin/webserver eval "IO.puts(:ok)", which spawns a separate BEAM instance every check. On a tiny droplet that can time out and create unnecessary pressure. The fix was to switch the healthcheck to a simple HTTP probe against /health.

Why Not Phoenix?

I've never actually used Phoenix, but from what I've heard it's one of the best web frameworks out there, not just in Elixir. If I were starting a real project I'd probably reach for it.

But I wanted to learn, not ship. I had just finished the Mix and OTP guide, which builds up a TCP server from scratch. It's a long guide and covers a lot of ground. Jumping straight to Phoenix after that felt like skipping steps. Plug sits in between: it handles HTTP without making all the other decisions for you.

Having to figure out caching, static files, and templates myself meant I actually understood what I was building. That's the point.

Elixir vs Node

Most of my professional work is in TypeScript and Node. They are great tools, but they feel very different from Elixir. What I enjoy most is how the BEAM makes concurrency so effortless. I don't have to worry about mutexes or thread pools. Running Node safely can also be tricky because JavaScript grew up in the browser, where failing silently is common, not in a runtime built for fault-tolerant systems.

Building this bespoke framework over the last few days has been a great way to learn Elixir. It's a powerful, elegant language that makes building resilient systems feel natural. It's definitely a tool I'll be reaching for again.

You can even view the source for this very page on GitHub to see how the templating works in practice.